Abstract

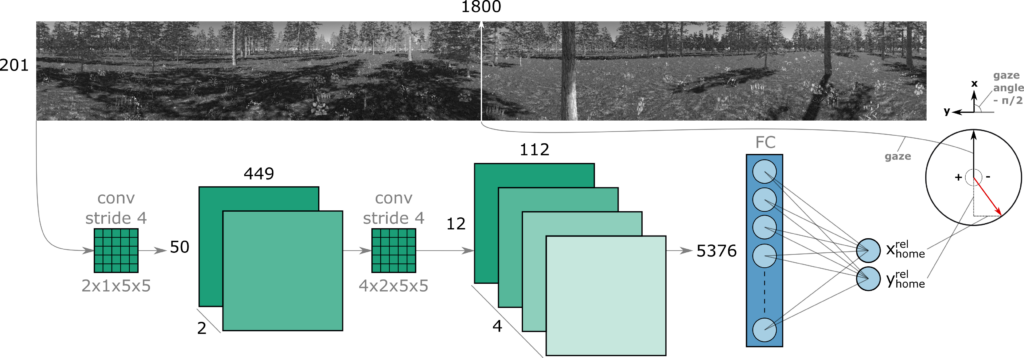

Insects have long been recognized for their ability to navigate and return home using visual cues from their nest’s environment. However, the precise mechanism underlying this remarkable homing skill remains a subject of ongoing investigation. Drawing inspiration from the learning flights of honey bees and wasps, we propose a robot navigation method that directly learns the home vector direction from visual percepts during a learning flight in the vicinity of the nest. After learning, the robot will travel away from the nest, come back by means of odometry, and eliminate the resultant drift by inferring the home vector orientation from the currently experienced view. Using a compact convolutional neural network, we demonstrate successful learning in both simulated and real forest environments, as well as successful homing control of a simulated quadrotor. The average errors of the inferred home vectors in general stay well below the 90° required for successful homing, and below 24° if all images contain sufficient texture and illumination. Moreover, we show that the trajectory followed during the initial learning flight has a pronounced impact on the network’s performance. A higher density of sample points in proximity to the nest results in a more consistent return.
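The 90° bound mentioned above can be illustrated with a small geometric sketch. It is not the authors' controller or network. It assumes a point robot in the plane, a nest at the origin, a fixed step length, and a constant heading error, whereas the abstract reports average errors. The function names `angular_error_deg` and `home_with_constant_error` and all parameter values are hypothetical. Under these assumptions, each step shortens the distance to the nest by roughly the step length times the cosine of the error, so progress is only made while the error stays below 90°.

```python
import math


def angular_error_deg(predicted, target):
    """Absolute angle in degrees between two 2D vectors, in [0, 180]."""
    px, py = predicted
    tx, ty = target
    diff = math.atan2(py, px) - math.atan2(ty, tx)
    # Wrap to [-pi, pi] so that headings of 350 and 10 degrees differ by 20, not 340.
    diff = math.atan2(math.sin(diff), math.cos(diff))
    return abs(math.degrees(diff))


def home_with_constant_error(start, error_deg, step=0.02, max_steps=5000, tol=0.1):
    """Step toward a nest at the origin with a fixed heading error (assumed model).

    Returns True if the robot gets within `tol` of the nest within `max_steps`.
    """
    x, y = start
    for _ in range(max_steps):
        if math.hypot(x, y) < tol:
            return True
        # True home direction, rotated by the assumed constant inference error.
        heading = math.atan2(-y, -x) + math.radians(error_deg)
        x += step * math.cos(heading)
        y += step * math.sin(heading)
    return False


if __name__ == "__main__":
    for err in (24, 60, 80, 100):
        reached = home_with_constant_error((5.0, 0.0), err)
        print(f"heading error {err:3d} deg -> reached nest: {reached}")
```

In this toy model, larger errors below 90° still reach the nest but along a longer, spiralling path, while errors above 90° move the robot away from the nest on every step.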

Relevant links

2024

Direct learning of home vector direction for insect-inspired robot navigation. Miscellaneous, 2024.